This whole remote learning thing has given me a lot of time to think. Well, maybe not a lot of time, given that I’m trying to plan, teach, and call parents with two toddlers dragging me into a never-ending game of hide-and-seek. But despite this, and the fact that I’m in essence a first-year teacher again, I have been trying my best to lean into this moment and try out some new things.

One of these things has been self-graded quizzes using Google Forms. Amy Hogan uses them a lot and was kind enough to share one of hers with me to get me started. After using hers as an example, I’ve created two in the past two weeks and really like them. I have attached a small grade to the quizzes to encourage kids to do them and take them seriously, but in the future I want them to be gradeless. Because of their self-graded nature, they do take a bit of time and energy to set up, but the flexibility and convenience they offer are pretty sweet. Trying to come up with interesting questions has been fun.

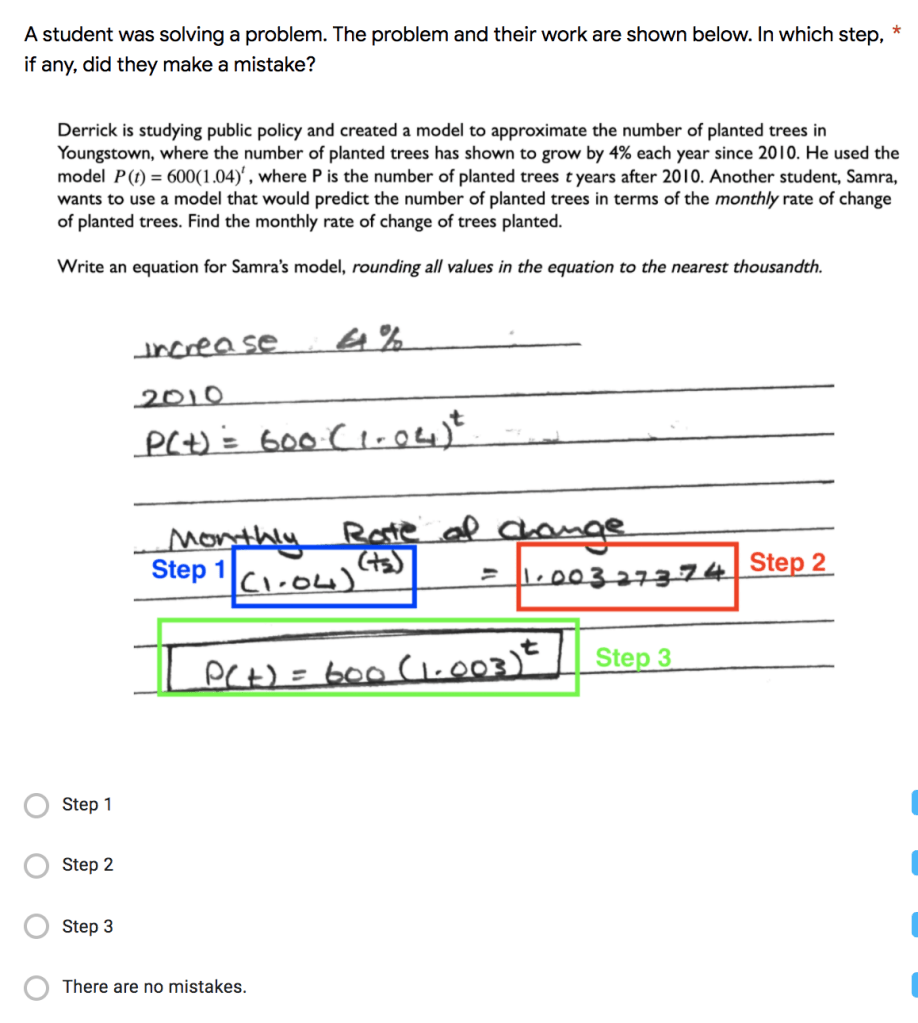

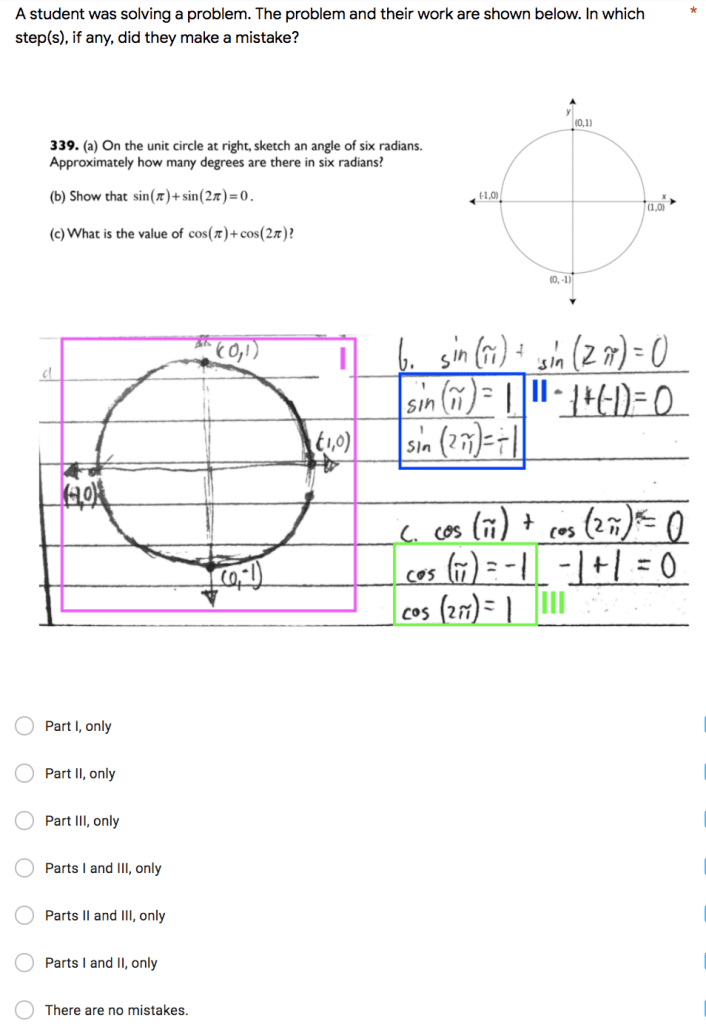

Since I try to leverage student work a lot, I’ve tried to come up with questions that ask students to analyze a piece of student work. Here are two that I conjured up:

As a formative assessment, seeing if my students can find errors in a coherent piece of work is a valuable task, but having the assessment data immediately available is actually what makes these types of questions worth asking. If these were free response questions, it would be a nightmare. I’d have to wade through the mire that is their responses and still wouldn’t have the clean, informative data that the self-graded quizzes give me.

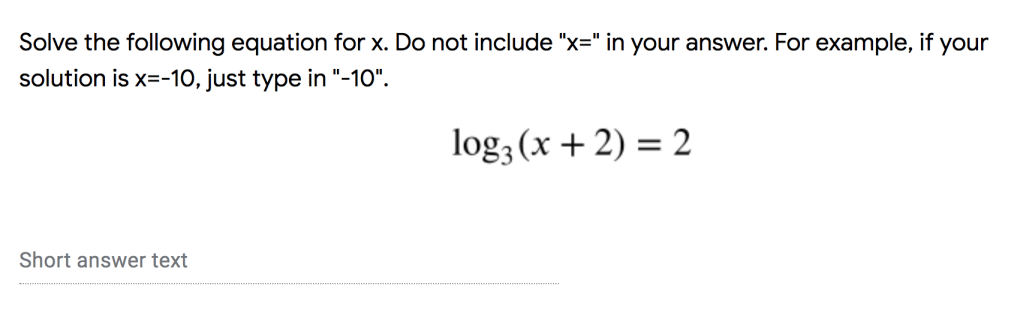

Though I have included a couple of free response questions, I’ve been trying to avoid them. Combing through all of their answers to check for equivalent solutions is something I’d rather not do. It defeats the purpose. When I do give a free response question, though, I’m learning how to spell out in the question what format the answer should take. For example:

That worked. Here’s one that didn’t:

The kids submitted all sorts of craziness from “105” to “the answer is 105” to “the remainder will be 105” to “105/x-5.” This turned into me checking through their answers one by one, like I would have normally without the use of a self-graded quiz. No fun.

On the other hand, here’s a question I created as a workaround to simply asking students to find the value of csc(π/2):

It’s not a great question by any means (neither are the other two), but at least it’s not free response and it’s a little less Googleable than it otherwise would be. Plus, when combined with the work they submit through Classroom for other problems we do, the question helped give me a loose understanding of what the kids know about radians. Then again, who knows. This is remote learning. Their big sister could be doing these quizzes.

I’m left thinking about how else I can spin my questions. How might the format of the question depend on the math that it’s asking about, and vice versa? What about using response validation? This would take even more work to design, but it is interesting to see where it could go (pun intended). I’m also thinking about using checkboxes in a question with multiple correct solutions and requiring students to identify one or all of them. I could also give a multiple choice question, not including the correct answer as one of the choices, but having an “other: ______ ” option where they would enter their answer. This could be a nice alternative to the remainder question I gave above because it would let kids know how to format their answer and could then be easily self-graded by the form.
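To make the response validation idea a bit more concrete, here is a minimal sketch in Python. It is not Google Forms itself, just a stand-in: the pattern and the `is_valid_format` helper are hypothetical names made up for illustration, and the assumption is that a similar "digits only" rule could be set as a regular expression check on a short-answer question. It runs the four kinds of responses from the remainder question through that rule.

```python
import re

# Hypothetical rule: an optional minus sign followed by digits, and nothing else.
INTEGER_ONLY = re.compile(r"-?\d+")


def is_valid_format(response: str) -> bool:
    """Return True if the response is a bare integer (surrounding spaces ignored)."""
    return INTEGER_ONLY.fullmatch(response.strip()) is not None


# The four kinds of responses students gave to the remainder question.
responses = [
    "105",
    "the answer is 105",
    "the remainder will be 105",
    "105/x-5",
]

# Map each response to whether the format rule would accept it.
results = {response: is_valid_format(response) for response in responses}

for response, ok in results.items():
    print(f"{response!r}: {'accepted' if ok else 'rejected'}")
```

Under this rule only the bare "105" gets through. The other three would be bounced back to the student before submission, so every answer that does arrive can be compared against the key automatically.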

There’s so much room for creativity in these quizzes that I wish I had more time to explore them. They’re so useful that I’m looking forward to having them follow me back into the classroom, whenever that happens. As for now, it’s back to figuring out where I’m going to hide next. The closet!

bp